Slurm batch script example

Job detail fields (key=value form, as printed by scontrol show job):

SuspendTime=None SecsPreSuspend=0 LastSchedEval=T09:53:11
StartTime=Unknown EndTime=Unknown Deadline=N/A
Requeue=1 Restarts=0 BatchFlag=1 Reboot=0 ExitCode=0:0
JobState=RUNNING Reason=Resources Dependency=(null)
Priority=191783 Nice=0 Account=nmsu QOS=normal

Accounting field names (as listed by sacct --helpformat):

TRESUsageOutMinNode TRESUsageOutMinTask TRESUsageOutTot UID
TRESUsageOutMax TRESUsageOutMaxNode TRESUsageOutMaxTask TRESUsageOutMin
TRESUsageInMinNode TRESUsageInMinTask TRESUsageInTot TRESUsageOutAve
TRESUsageInMax TRESUsageInMaxNode TRESUsageInMaxTask TRESUsageInMin
Timelimit TimelimitRaw TotalCPU TRESUsageInAve
ReqTRES Reservation ReservationId Reserved
ReqCPUFreq ReqCPUFreqMin ReqCPUFreqMax ReqCPUFreqGov
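Field names like the ones above are typically passed to sacct through its --format option as a comma-separated list. The following is a minimal sketch, assuming a selection of four fields chosen only for illustration (JobID does not appear in the list above); it falls back to printing the command when sacct is not installed.

```shell
#!/bin/sh
# Comma-separated accounting fields to display (illustrative selection).
format="JobID,Timelimit,TotalCPU,ReqTRES"

if command -v sacct >/dev/null 2>&1; then
    # On a Slurm cluster: show the chosen columns for the user's recent jobs.
    sacct --format="$format"
else
    # Outside a cluster: only show what would be run.
    echo "sacct not found; would run: sacct --format=$format"
fi
```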

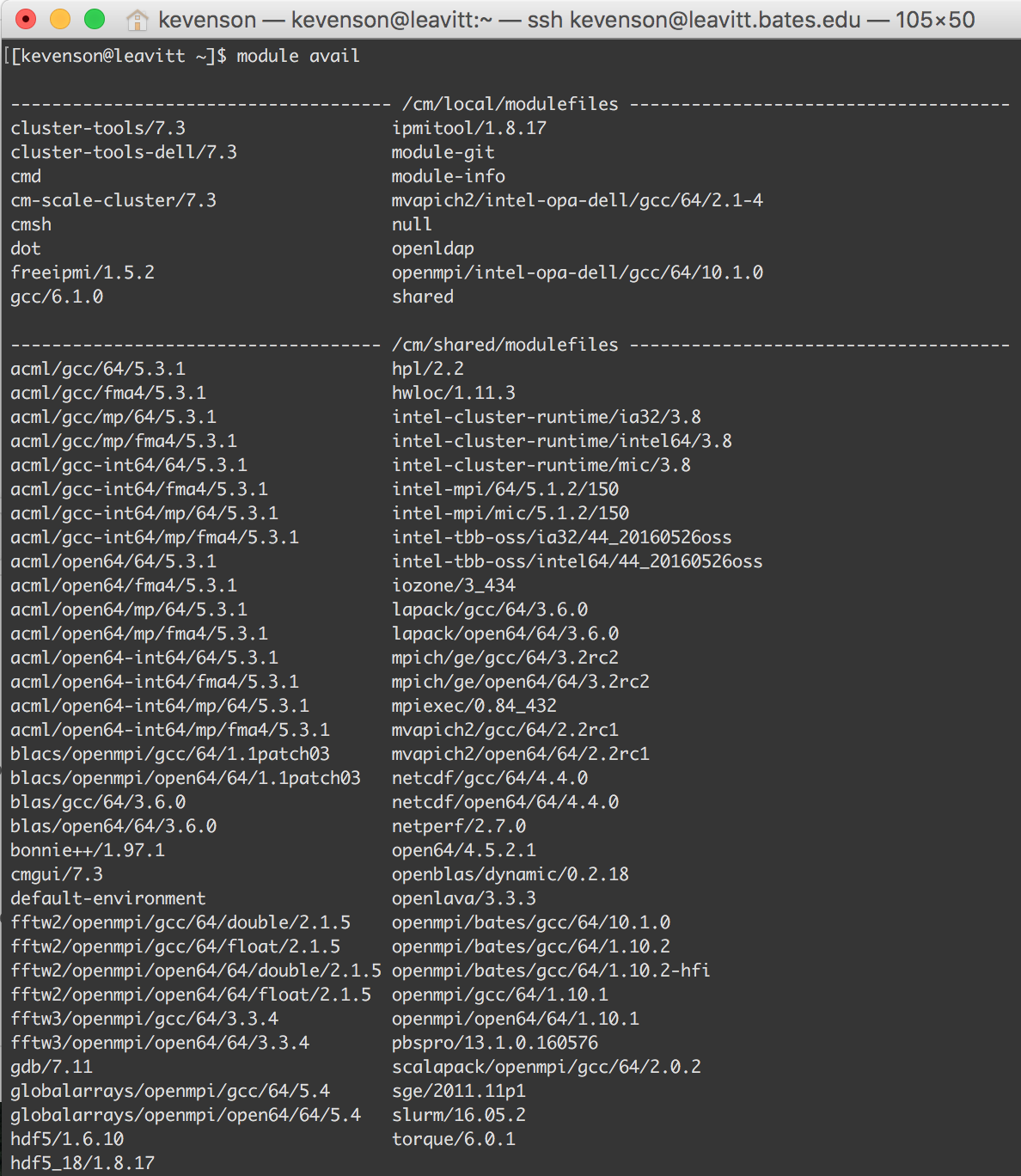

MaxVMSize MaxVMSizeNode MaxVMSizeTask McsLabel
MaxPagesTask MaxRSS MaxRSSNode MaxRSSTask
MaxDiskWriteNode MaxDiskWriteTask MaxPages MaxPagesNode
MaxDiskRead MaxDiskReadNode MaxDiskReadTask MaxDiskWrite

| JobID | JobName | ExitCode | Group | MaxRSS | Partition | NNodes | AllocCPUS | State |
|---|---|---|---|---|---|---|---|---|
| 913974 | test | 0:0 | vaduaka | | normal | 1 | 3 | COMPLETED |
| 915576 | maxFib | 0:0 | vaduaka | | normal | 1 | 1 | COMPLETED |
| 915576.exte+ | extern | 0:0 | | 88K | | 1 | 1 | COMPLETED |
| 915577 | maxFib | 0:0 | vaduaka | | normal | 1 | 1 | COMPLETED |
| 915577.exte+ | extern | 0:0 | | 84K | | 1 | 1 | COMPLETED |
| 915578 | maxFib | 0:0 | vaduaka | | normal | 1 | 1 | COMPLETED |
| 915665 | maxFib | 0:0 | vaduaka | | normal | 1 | 1 | COMPLETED |
| 915665.exte+ | extern | 0:0 | | 92K | | 1 | 1 | COMPLETED |

AveCPUFreq AveDiskRead AveDiskWrite AvePages
Comment Constraints ConsumedEnergy ConsumedEnergyRaw
CPUTime CPUTimeRAW DBIndex DerivedExitCode

--get-user-env: Tells sbatch to retrieve the login environment variables. Be aware that any environment variables already set in sbatch's environment take precedence over those in the user's login environment. Before calling sbatch, clear any environment variables that you don't want propagated to the spawned program.
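The advice about clearing variables before submission can be sketched as follows. The variable name DEBUG_LEVEL and the script name job.sh are placeholders, and a child shell stands in for the spawned program so the effect can be observed without a scheduler.

```shell
#!/bin/sh
# Hypothetical variable that should not reach the job.
export DEBUG_LEVEL=3

# Remove it from the submitting shell before calling sbatch.
unset DEBUG_LEVEL

# A child process stands in for the spawned program: it no longer sees the variable.
child_view=$(sh -c 'echo "${DEBUG_LEVEL:-unset}"')
echo "DEBUG_LEVEL in child: ${child_view}"

# On a cluster, the submission would follow (job.sh is a placeholder name):
# sbatch --get-user-env job.sh
```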

--job-name: Names the job. The specified name will appear along with the job ID number when querying running jobs on the system. The default is the name of the batch script, or just sbatch if the script is read on sbatch's standard input.

--output: Instructs Slurm to connect the batch script's standard output directly to the given filename. If not specified, the default filename is slurm-jobID.out.

--partition: Requests a specific partition for the resource allocation (gpu, interactive, normal). If not specified, the default partition is normal.

--ntasks: Advises the Slurm controller that job steps run within the allocation will launch at most number tasks, and asks it to provide sufficient resources. The default is 1 task per node, but note that the --cpus-per-task option changes this default.

--cpus-per-task: Advises the Slurm controller that ensuing job steps will require ncpus processors per task. Without this option, the controller will just try to allocate one processor per task. For instance, consider an application that has 4 tasks, each requiring 3 processors. If the HPC cluster consists of quad-processor nodes and you simply ask for 12 processors, the controller might give you only 3 nodes. However, with --cpus-per-task=3 the controller knows that each task requires 3 processors on the same node. Hence, it will grant an allocation of 4 nodes, one for each of the 4 tasks.

--mem-per-cpu: The minimum memory required per allocated CPU. Note: It's highly recommended to specify --mem-per-cpu. If it is not specified, the default setting of 500MB will be assigned per CPU.

--time: Sets a limit on the total run time of the job allocation. If the requested time limit exceeds the partition's time limit, the job will be left in a PENDING state (possibly indefinitely). The default time limit is the partition's default time limit. A time limit of zero requests that no time limit be imposed. The acceptable time format is days-hours:minutes:seconds. Note: It's mandatory to specify a time in your script. Jobs that don't specify a time will be given a default time of 1 minute, after which the job will be killed. This modification was made to implement the new backfill scheduling algorithm, and it won't affect partition wall time.

--mail-user: Defines the user who will receive email notification of state changes as defined by --mail-type.

--mail-type: Notifies the user by email when certain event types occur. The user to be notified is indicated with --mail-user. The values of the --mail-type directive can be declared in one line like so: --mail-type=BEGIN,END,FAIL
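The options described above can be combined in a single batch script. The following is a minimal sketch, not a site-approved template: the job name demo_job, the output filename, and the address user@example.com are placeholders, and the resource values simply reuse the 4-task, 3-processor example and the 500MB default mentioned in the text.

```shell
#!/bin/bash
# Name shown next to the job ID when querying jobs (demo_job is a placeholder).
#SBATCH --job-name=demo_job
# Standard output file; %j expands to the job ID.
#SBATCH --output=demo_job-%j.out
#SBATCH --partition=normal
# 4 tasks, each needing 3 processors on the same node.
#SBATCH --ntasks=4
#SBATCH --cpus-per-task=3
# Minimum memory per allocated CPU.
#SBATCH --mem-per-cpu=500M
# Run-time limit in days-hours:minutes:seconds (here 10 minutes).
#SBATCH --time=0-00:10:00
#SBATCH --mail-type=BEGIN,END,FAIL
#SBATCH --mail-user=user@example.com
#SBATCH --get-user-env

# Slurm sets these variables inside a job; the fallbacks mirror the
# directives above so the arithmetic also works outside the scheduler.
ntasks=${SLURM_NTASKS:-4}
cpus_per_task=${SLURM_CPUS_PER_TASK:-3}
total_cpus=$(( ntasks * cpus_per_task ))

echo "Job ${SLURM_JOB_ID:-none}: ${ntasks} tasks x ${cpus_per_task} CPUs = ${total_cpus} CPUs"
```

Saved under a name such as demo_job.sh (again a placeholder), the script would be submitted with sbatch demo_job.sh. The #SBATCH lines are ordinary comments to the shell, so running the file directly only prints the CPU arithmetic.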